How to Lower Latency and Improve QoE

There are three certainties in life: death, taxes, and subscribers complaining that their internet service is slow.

When ISPs receive those calls, the default reaction might be to suggest a plan upgrade to increase the speed (throughput) of the customer’s internet connection.

Or, they might enter into a time-consuming root cause analysis that involves finding out whether the issue is widespread across the network or local to the subscriber’s home. It might even mean a truck roll to try to isolate the problem.

Consider also that some people don’t like to complain. As a result, they might never contact their ISP when their internet connection is slow. They might simply assume that slowdown periods are just a fact of internet life. “It’s a busy time of day,” for example, or “I’m downloading a new game update right now, so that must be why it’s slow—we just have to wait it out.”

The good news for both ISPs and subscribers is that it doesn’t have to be this way.

A poor subscriber quality of experience (QoE) is not necessarily due to heavy peak time usage or download size. Instead, it’s often due to high latency caused by poor packet queue management and bufferbloat.

Because high latency is one of the leading causes of poor subscriber QoE, reducing it should be a key consideration for all ISPs. After all, QoE optimization leads to less churn, lower support costs, and happier customers.

What is Network Latency?

Before we look at how to lower latency, let’s define what it is and its causes and effects.

As our Fixed Wireless Glossary states, latency is “the time it takes for data to be transferred from its original source to its destination, measured in milliseconds.” In our case, Preseem tracks the round-trip time (RTT) of a request—the time it takes between clicking on a link and seeing the result, for example—to measure network latency. The higher the latency, the slower the response, and the poorer the experience for the user.

There are two kinds of latency: idle latency and working latency, also known as latency under load. As noted in a recent report by the Broadband Internet Technical Advisory Group, idle latency “reflects only the underlying attributes of the access network… as well as path distance, while the network is not being used.” Measuring idle latency can be helpful on a diagnostic level, but it’s not a significant factor for QoE.

Working latency, or latency under load, is how the user experience is truly defined. Our glossary notes that high latency “contributes to common issues like dropped video calls, buffering video, and slow web pages.” These are examples of latency under load issues. All of these can cause those ‘my internet is slow’ complaints mentioned above.
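To make the distinction concrete, here is a minimal Python sketch that compares the two kinds of latency from round-trip time samples. The function name and the sample values are made up for illustration, and this is not a description of how Preseem measures latency.

```python
from statistics import median

def latency_summary(idle_rtts_ms, loaded_rtts_ms):
    """Summarize idle vs. working latency from two lists of RTT samples (ms)."""
    idle = median(idle_rtts_ms)
    working = median(loaded_rtts_ms)
    return {
        "idle_ms": idle,
        "working_ms": working,
        # Extra delay that only appears while the link is busy.
        "added_under_load_ms": working - idle,
    }

# Hypothetical samples: pings on a quiet link vs. during a large download.
idle_samples = [18.0, 20.0, 19.0, 21.0, 20.0]
loaded_samples = [180.0, 240.0, 210.0, 260.0, 220.0]

summary = latency_summary(idle_samples, loaded_samples)
print(summary)
```

In this invented example the idle number looks healthy, and the problem only shows up in the latency added under load. That added delay is what the subscriber actually feels.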

These problems became heightened during the pandemic as remote work and schooling made a consistently responsive internet all the more crucial. By now, we’ve all endured the frustrating experience of a jittery or dropped Zoom call.

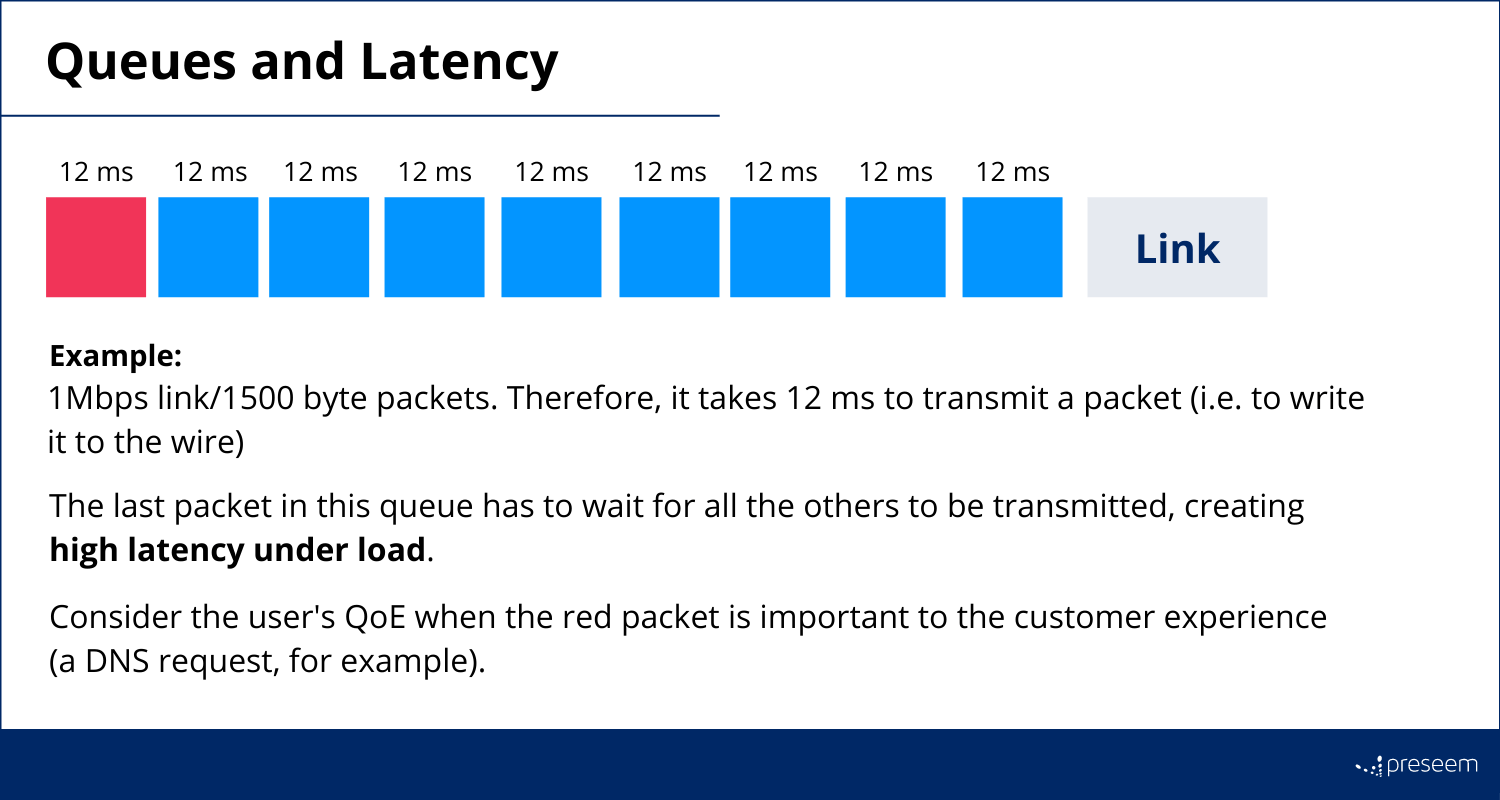

The majority of these issues are caused by queuing delays at bottleneck links.

Packet queues are a necessary component of any network, so they are not the problem in themselves. It’s the size of the queues and the way they are managed at the bottleneck that cause high latency under load and hurt subscriber QoE.
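A quick back-of-the-envelope calculation shows why queue size matters. The worst-case delay a full buffer adds is roughly its size divided by the link rate. The sketch below works that out in Python. The buffer size and link rates are illustrative assumptions, not measurements from any particular device.

```python
def max_queue_delay_ms(buffer_bytes, link_rate_bps):
    """Worst-case queuing delay (ms) when a buffer of this size is full."""
    # bytes -> bits, seconds -> milliseconds, then divide by the drain rate
    return buffer_bytes * 8 * 1000 / link_rate_bps

# Hypothetical 1 MB buffer in front of two different bottleneck links.
buffer_bytes = 1_000_000
delay_10m = max_queue_delay_ms(buffer_bytes, 10_000_000)    # 10 Mbps link
delay_100m = max_queue_delay_ms(buffer_bytes, 100_000_000)  # 100 Mbps link

print(f"10 Mbps:  {delay_10m:.0f} ms")
print(f"100 Mbps: {delay_100m:.0f} ms")
```

Under these assumptions, the same buffer that adds a tolerable delay on the faster link adds most of a second on the slower one. This is the bufferbloat effect described above.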

Use AQM to Lower Latency

The easiest and most efficient way to lower network latency is to improve queue management. This is where active queue management (AQM) comes in. AQM is a traffic management technique that proactively drops (or marks) packets before buffers become full. Senders treat those drops as a congestion signal and slow down, so routers can keep queues short and latency low, even when multiple devices in the subscriber’s home are online.
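The toy simulation below contrasts a plain tail-drop buffer with a deliberately simplified AQM rule. The rule drops packets at the head of the queue once they have waited longer than a target time. This is in the spirit of CoDel but omits its interval and drop-rate control law, so treat it as a sketch rather than a real implementation. The traffic pattern, buffer size, and 20 ms target are assumptions chosen for illustration, and the sender here never slows down, which a real TCP sender would.

```python
from collections import deque

def simulate(arrivals, capacity, target_ms=None):
    """Simulate a bottleneck that sends one packet per millisecond.

    arrivals[t] is the number of packets arriving at millisecond t.
    capacity is the buffer size in packets (tail drop when full).
    target_ms, if set, enables the simplified AQM rule: packets that
    have waited longer than target_ms are dropped from the head.
    Returns (delays_ms_of_delivered_packets, dropped_count).
    """
    queue = deque()  # stores each queued packet's arrival time
    delays, dropped = [], 0
    for now, count in enumerate(arrivals):
        for _ in range(count):
            if len(queue) < capacity:
                queue.append(now)
            else:
                dropped += 1  # tail drop: the buffer is already full
        if target_ms is not None:
            while queue and now - queue[0] > target_ms:
                queue.popleft()  # early drop: this packet waited too long
                dropped += 1
        if queue:
            delays.append(now - queue.popleft())  # deliver one packet
    return delays, dropped

# Hypothetical load: a 300-packet burst, then arrivals at exactly line rate.
arrivals = [300] + [1] * 999

tail_delays, tail_dropped = simulate(arrivals, capacity=200)
aqm_delays, aqm_dropped = simulate(arrivals, capacity=200, target_ms=20)

print("tail drop: max delay", max(tail_delays), "ms")
print("simple AQM: max delay", max(aqm_delays), "ms")
```

With tail drop, the burst leaves a standing queue that every later packet has to wait behind. The simplified AQM rule clears that standing queue, so delay settles at the target. In this toy model the AQM drops more packets in total because the sender is unresponsive. With real senders that back off, the early drops are what keep the queue from building in the first place.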

The FQ-CoDel algorithm combines queue management with flow isolation. This ensures that interactive applications (such as online gaming) are not negatively affected by high-bandwidth activities (such as Netflix streaming), which reduces latency under load beyond what a single-queue AQM can achieve.
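Flow isolation can be sketched in a few lines of Python. The example compares a single shared queue with per-flow queues served round-robin. It is only a sketch of the idea. Real FQ-CoDel hashes packets into queues, uses byte-based deficit round robin, gives priority to new flows, and runs CoDel on each queue, none of which is modeled here. The flow names and packet counts are made up.

```python
from collections import deque

def fifo_order(packets):
    """Single shared queue: packets leave in arrival order."""
    return list(packets)

def flow_queue_order(packets):
    """Per-flow queues served round-robin, one packet per flow per round."""
    queues = {}  # flow name -> deque of that flow's packets
    for flow, seq in packets:
        queues.setdefault(flow, deque()).append((flow, seq))
    order = []
    while queues:
        for flow in list(queues):  # copy keys so we can delete safely
            order.append(queues[flow].popleft())
            if not queues[flow]:
                del queues[flow]  # this flow has nothing left to send
    return order

# Hypothetical backlog: 100 video packets queued, then one game packet.
backlog = [("video", i) for i in range(100)] + [("game", 0)]

fifo_pos = fifo_order(backlog).index(("game", 0))
fq_pos = flow_queue_order(backlog).index(("game", 0))

print("game packet position, single queue:", fifo_pos)
print("game packet position, flow queues: ", fq_pos)
```

In the single queue, the game packet waits behind the entire video backlog. With per-flow queues, it goes out in the first round, right after one video packet.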

Some operators use Deep Packet Inspection (DPI) tools to investigate sluggish internet complaints. However, we’ve found that AQM is a more efficient method because:

- DPI can help identify which applications caused a slow internet experience. However, AQM solves the slow internet problem before it affects the customer in the first place.

- AQM allows ISPs to proactively identify and fix network issues, with no technical ‘geek knob’ know-how required.

- FQ-CoDel automatically separates traffic into per-flow queues and favors sparse, interactive flows over bulk ones, so you can just set it and forget it instead of trying to keep up with constantly changing applications and complicated rule updates to classify packets.

- Manual manipulation of subscriber traffic (treating some types of traffic in a non-neutral way) is a difficult feature to sell. It’s also more expensive in the long run, needing constant supervision in a changing internet environment. AQM frees up your time and delivers consistent results.

Lower Latency and Improve Performance

As noted in this article by Jason Livingood, Vice-President of Technology Policy and Standards at Comcast, moving to AQM can deliver “a huge improvement in user QoE, with many network latencies improving to 15 to 30 milliseconds.”

Most importantly, the internet will feel faster and more responsive for your customers, even under heavy usage. This means improved subscriber QoE, which translates to a happier customer base, fewer support calls, and more revenue for your business.

Providing low latency under load is a competitive advantage for your ISP. Throughput is easier to measure, but latency has an outsized impact on your subscribers’ perception of how fast their connection is. If you can consistently improve network latency and deliver internet that “feels fast” for users, then positive word of mouth will spread just as quickly.