Preseem recently held a technical webinar where our experts discussed the common sources of network latency and how to combat them. They also looked at how the transport layer is evolving from TCP to QUIC, and what that shift means for the internet and for how networks are managed.

The webinar was hosted by our co-founder and Chief Product Officer Dan Siemon and our Senior Product Manager Jeremy Austin. Scroll down to view the webinar in full or read on for an overview of their discussion.

Network Latency Sources

Don’t Worry About Propagation Delay

Some might think of network latency as the time it takes for information to move from one place to another, also known as propagation delay. In reality, however, this isn’t an issue for most ISP networks. That’s because signals travel at a large fraction of the speed of light. Light in a vacuum moves at about 186,000 miles per second. Signals in fiber travel at roughly two-thirds of that (about 124,000 miles per second), and electrical signals in copper travel at a comparable fraction of light speed, depending on the cable.

You can’t go faster than that without inventing new physics 🙂 Unless your network spans continents or oceans or uses geosynchronous satellites, this isn’t something you need to worry about. Propagation delay simply isn’t an issue for typical residential and commercial internet providers.
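To put rough numbers on this, here is a minimal sketch that divides distance by signal speed. The path lengths are hypothetical, and the fiber speed assumes a refractive index of about 1.47, so treat the output as ballpark figures only.

```python
# Approximate signal speeds. The fiber figure assumes a refractive index of about 1.47.
C_VACUUM_KM_S = 299_792.458
FIBER_KM_S = C_VACUUM_KM_S / 1.47  # roughly 204,000 km/s


def propagation_delay_ms(distance_km: float, speed_km_s: float = FIBER_KM_S) -> float:
    """One-way propagation delay in milliseconds."""
    return distance_km / speed_km_s * 1000.0


# Hypothetical path lengths, chosen only for illustration.
for label, km in [("tower to POP", 50), ("regional backhaul", 500), ("ocean crossing", 6000)]:
    print(f"{label:>18}: {propagation_delay_ms(km):6.2f} ms one way over fiber")

# A geosynchronous satellite sits about 35,786 km up, so a ground-satellite-ground
# hop covers at least twice that distance at the vacuum speed of light.
geo_hop_ms = propagation_delay_ms(2 * 35_786, C_VACUUM_KM_S)
print(f"{'GEO satellite hop':>18}: {geo_hop_ms:6.2f} ms one way")
```

Under these assumptions, a 50 km path costs about a quarter of a millisecond, while the ocean crossing and the satellite hop land in the tens to hundreds of milliseconds. That gap is why propagation delay only matters at those scales.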

Packet Buffering is the Largest Source of Latency

The real source of latency is buffering. As packets come in, they’re stored in a buffer, moved across to the output link by the hardware, and then transmitted. Buffer latency is the time a packet spends inside a piece of hardware, moving between ports and sitting in buffers. If you have copper in and fiber out, for example, packets have to be stored while they make the transition between media. And storing packets for any length of time is what causes network latency.
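As a rough illustration of how quickly stored packets turn into delay, the sketch below computes how long a newly arrived packet waits behind a backlog. The backlog size and link rates are assumed values, not figures from the webinar.

```python
def queue_delay_ms(queued_bytes: int, link_mbps: float) -> float:
    """Time to drain the bytes already queued ahead of a new packet, in milliseconds."""
    return queued_bytes * 8 / (link_mbps * 1_000_000) * 1000.0


# Assumed backlog: 100 full-size (1500-byte) packets waiting in the buffer.
backlog_bytes = 100 * 1500

for mbps in (10, 100, 1000):
    delay = queue_delay_ms(backlog_bytes, mbps)
    print(f"{mbps:>5} Mbps link: {delay:7.2f} ms waiting behind the backlog")
```

The same backlog that costs about a millisecond on a gigabit link costs over a hundred milliseconds on a 10 Mbps link, which dwarfs the propagation delay of a typical access network.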

Another source of latency is aggregation. When a wireless access point gets a packet, for example, it may choose to wait for another because then it can ‘amortize the cost’ of the RF frame across multiple packets.

That’s good for throughput, but the time spent waiting adds latency. The longer you wait, the bigger the aggregate you form; the less time you wait, the better the latency. Finding the right balance between throughput and latency is a difficult task.
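The trade-off can be sketched with a toy model. Everything here is an assumption made for illustration: packets arrive at a fixed spacing, every RF frame pays a fixed overhead, and the access point waits a fixed time before sending. Real radios are far more complicated.

```python
def aggregation_tradeoff(wait_us: int, arrival_gap_us: int = 500,
                         frame_overhead_us: int = 200, packet_airtime_us: int = 50):
    """Return (packets per frame, airtime efficiency, mean added wait in microseconds)."""
    # The first packet starts the timer; one more packet arrives every arrival_gap_us.
    packets = 1 + wait_us // arrival_gap_us
    airtime_us = frame_overhead_us + packets * packet_airtime_us
    efficiency = packets * packet_airtime_us / airtime_us
    # The first packet waits the full timer; later arrivals wait less.
    mean_wait_us = sum(wait_us - i * arrival_gap_us for i in range(packets)) / packets
    return packets, efficiency, mean_wait_us


for wait in (0, 1000, 2000, 4000):
    packets, efficiency, mean_wait = aggregation_tradeoff(wait)
    print(f"wait {wait:>4} us: {packets} pkts/frame, "
          f"{efficiency:.0%} of airtime is payload, mean added wait {mean_wait:.0f} us")
```

In this model, waiting longer steadily raises the share of airtime spent on payload, and the average added delay rises right along with it.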

Packet Loss Can Add Application or User Latency

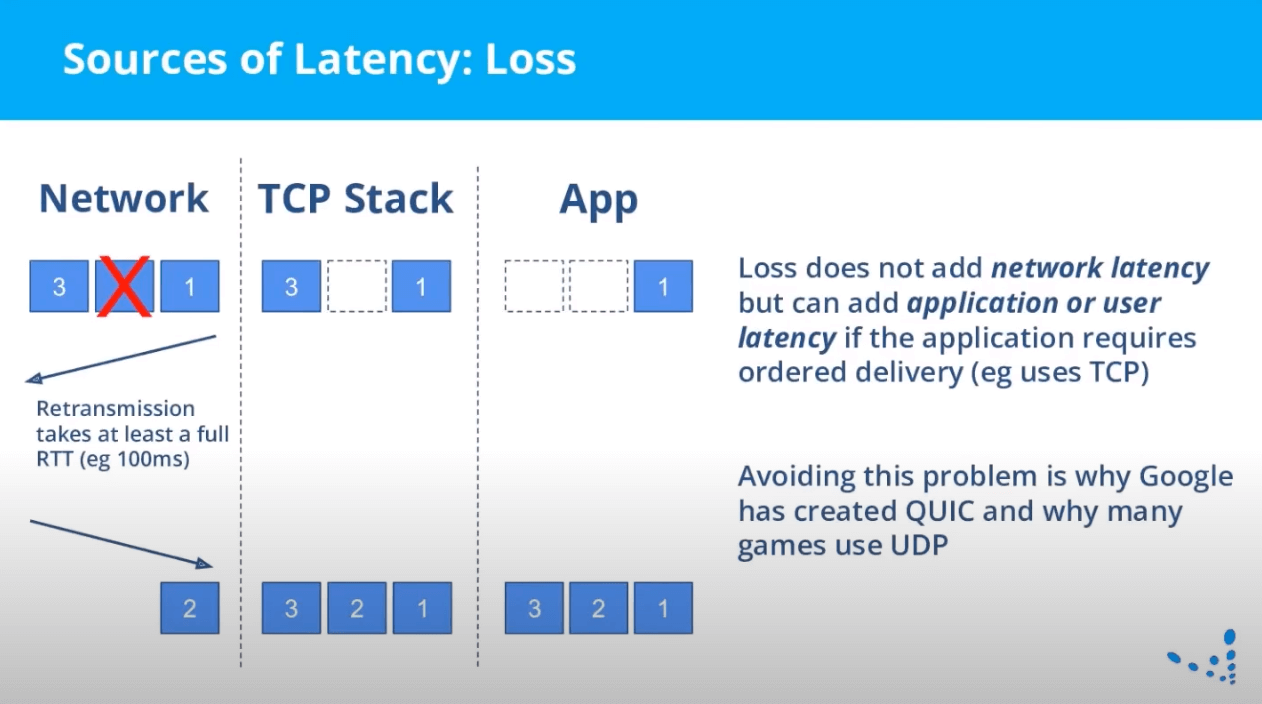

Another common source of latency is packet loss. This source is much more subtle and a little harder to understand. It’s really important in the context of transport protocol changes, however.

As the slide shows, if two packets are moving down a link and Packet A is dropped, it doesn’t add latency to Packet B because they’re completely independent of each other. However, if they’re in the same transport connection, the application may perceive significant latency.

This happens when applications use TCP, which requires ordered delivery: Packet B has to wait at the receiver until Packet A has been retransmitted. So, loss itself doesn’t add network latency. It can, however, add application or user latency if the app in question uses TCP. This is one reason Google developed QUIC and why many games now use UDP. More on QUIC below!
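Here is a minimal sketch of that effect. The arrival times are made up: Packet A (sequence 0) is lost and its retransmission shows up late, while the packets behind it arrive on time. The two functions are simplified stand-ins for ordered delivery and independent delivery, not real protocol implementations.

```python
def in_order_delivery(arrivals_ms):
    """Delivery times when data must be handed to the app in sequence order."""
    delivered, latest = [], 0.0
    for arrival in arrivals_ms:  # index in the list is the sequence number
        latest = max(latest, arrival)  # cannot deliver before everything earlier arrived
        delivered.append(latest)
    return delivered


def independent_delivery(arrivals_ms):
    """Delivery times when each packet is handed to the app as soon as it arrives."""
    return list(arrivals_ms)


# Hypothetical arrivals: Packet A is retransmitted and arrives at 70 ms,
# the three packets sent after it arrive at 11, 12, and 13 ms.
arrivals = [70.0, 11.0, 12.0, 13.0]

print("ordered delivery:    ", in_order_delivery(arrivals))
print("independent delivery:", independent_delivery(arrivals))
```

With ordered delivery, every packet is held until 70 ms even though three of them reached the receiver almost 60 ms earlier. The network was not slower for those packets; the application simply could not see them.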

So Why Not Just Get Rid of Packet Buffers?

If packet buffers are the main source of latency, why not just get rid of them? Well, as you probably know, this isn’t possible! Buffers are absolutely necessary for performance in a packet network.

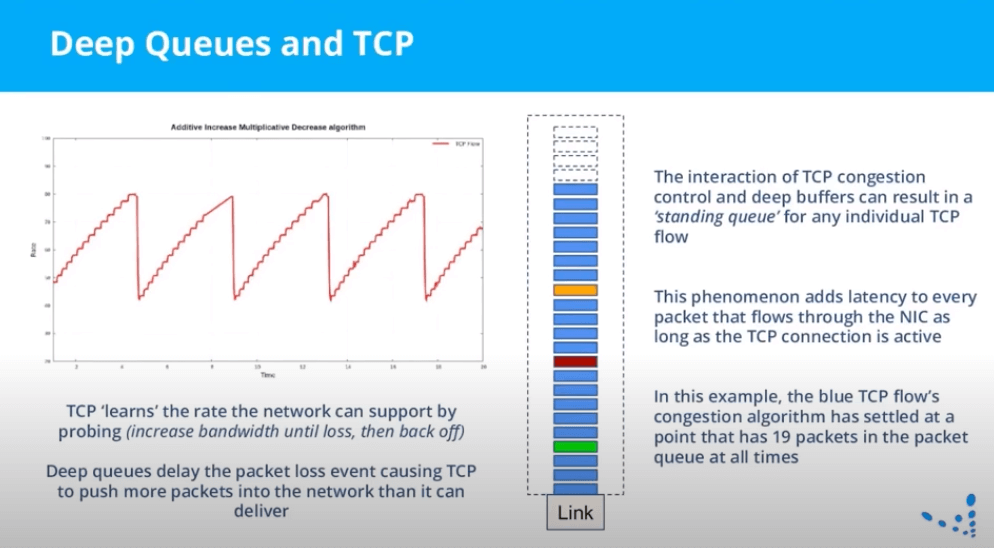

Imagine a link with no buffer: if a second packet arrived while the first was still being transmitted, it would have to be dropped. The link would then sit idle once the first packet is sent, causing low link utilization and wasted bandwidth. Links have queues so that they never go idle while there is traffic to send. The problem comes when queues are allowed to get too big, which adds latency. The slide shows an example of this.
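A small simulation makes the point about idle links concrete. This is a toy model with assumed numbers: the link sends one packet per tick, and traffic arrives in bursts of four packets every four ticks, so the average offered load exactly matches the link rate.

```python
def simulate_link(arrivals_per_tick, buffer_size):
    """Return (sent, dropped, utilization) for a link that sends one packet per tick."""
    in_system = 0  # packets held, including the one currently on the wire
    sent = dropped = 0
    for arriving in arrivals_per_tick:
        room = 1 + buffer_size - in_system
        accepted = min(arriving, room)
        dropped += arriving - accepted  # no room left: the packet is lost
        in_system += accepted
        if in_system > 0:  # the link transmits one packet this tick
            in_system -= 1
            sent += 1
    return sent, dropped, sent / len(arrivals_per_tick)


bursty = [4, 0, 0, 0] * 5  # 20 packets offered over 20 ticks

for size in (0, 3):
    sent, dropped, utilization = simulate_link(bursty, size)
    print(f"buffer of {size}: sent {sent}, dropped {dropped}, utilization {utilization:.0%}")
```

With no buffer, three out of every four packets are dropped and the link sits idle 75% of the time. A buffer of just three packets absorbs each burst and keeps the link fully used with no loss.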

Deep Queues and TCP

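Loss-based TCP effectively measures how many packets the network can hold: it keeps increasing its sending rate until a packet is dropped. If you have a really big queue, that drop comes late, so you can get a situation where TCP is always sending too much and the queue never drains.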

Big, unmanaged buffers (aka Bufferbloat) are why the internet gets slower as a link nears capacity. When a queue starts to back up, latency and loss creep up. People perceive this as fundamental to how the internet performs, i.e. that when a link is at 90%, the experience is bad. This isn’t true, however. It’s actually just due to poor queuing on the interfaces that are transmitting to that link.

This leads to something of a ‘Goldilocks problem.’ That is, you need buffers to get really good throughput, but if you have too much buffering, you get too much latency. In the network world, we’ve been very focused on throughput and not on latency, so buffers keep getting bigger and latency keeps getting worse. So how to solve for latency?
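The Goldilocks problem shows up even in a very crude model. The sketch below runs a single sender that adds one packet to its window each round trip and halves the window on loss, against a drop-tail buffer of a fixed size. The link capacity, base round-trip time, and the sender itself are simplifying assumptions, not a faithful TCP implementation.

```python
def aimd_over_buffer(buffer_pkts, bdp_pkts=100, base_rtt_ms=20.0, rounds=20_000):
    """Return (average link utilization, average queuing delay in ms)."""
    cwnd = 1  # packets in flight per round trip
    util_sum = delay_sum = 0.0
    for _ in range(rounds):
        # Anything beyond what the link itself holds sits in the buffer.
        queue = min(max(cwnd - bdp_pkts, 0), buffer_pkts)
        util_sum += min(cwnd, bdp_pkts) / bdp_pkts
        delay_sum += queue / bdp_pkts * base_rtt_ms
        if cwnd > bdp_pkts + buffer_pkts:
            cwnd = max(cwnd // 2, 1)  # buffer overflowed: multiplicative decrease
        else:
            cwnd += 1  # no loss seen: additive increase
    return util_sum / rounds, delay_sum / rounds


for size in (0, 100, 1000):
    utilization, delay = aimd_over_buffer(size)
    print(f"buffer {size:>4} pkts: utilization {utilization:.0%}, "
          f"average queuing delay {delay:.1f} ms")
```

In this model, no buffer leaves the link underused, a buffer about the size of the link’s capacity keeps it busy with modest delay, and a buffer ten times larger adds a large standing delay without any extra throughput.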

Active Queue Management

Active Queue Management (AQM) techniques using the FQ-CoDel algorithm dynamically size the queue and optimize for good latency and throughput at the same time. This is a very significant change and one that remains the state of the art for individual queue management. AQM solves the problem at its root in a fundamentally simpler way instead of adding complexity and workarounds. Bottom line: There are techniques that allow packet networks to have a great experience even under 100% load. AQM with FQ-CoDel is an example of that.
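To give a feel for how this works, here is a simplified sketch of the CoDel half of FQ-CoDel. It is not the full algorithm: the flow-queuing part and several refinements of real CoDel are left out, and the class and function names are made up for this example. The core idea is kept: timestamp each packet on arrival, and start dropping only when packets have been waiting longer than a small target (5 ms) for a sustained interval (100 ms), with drops spaced closer together the longer the problem persists.

```python
import math
from collections import deque

TARGET = 0.005    # acceptable standing delay in seconds (CoDel's usual default)
INTERVAL = 0.100  # how long delay must stay above TARGET before dropping starts


class SimpleCoDel:
    def __init__(self):
        self.queue = deque()     # items are (enqueue_time, packet)
        self.first_above = None  # deadline after which persistent delay triggers drops
        self.dropping = False
        self.count = 0           # drops in the current dropping episode
        self.drop_next = 0.0
        self.drops = 0

    def enqueue(self, packet, now):
        self.queue.append((now, packet))

    def _pop(self, now):
        """Pop one packet; also report whether delay has exceeded TARGET for INTERVAL."""
        if not self.queue:
            self.first_above = None
            return None, False
        enqueued_at, packet = self.queue.popleft()
        if now - enqueued_at < TARGET:
            self.first_above = None  # delay is fine again
            return packet, False
        if self.first_above is None:
            self.first_above = now + INTERVAL  # start the clock
            return packet, False
        return packet, now >= self.first_above

    def dequeue(self, now):
        packet, ok_to_drop = self._pop(now)
        if self.dropping:
            if not ok_to_drop:
                self.dropping = False
            while self.dropping and now >= self.drop_next:
                self.drops += 1  # discard this packet and try the next one
                self.count += 1
                packet, ok_to_drop = self._pop(now)
                if not ok_to_drop:
                    self.dropping = False
                else:
                    self.drop_next += INTERVAL / math.sqrt(self.count)
        elif ok_to_drop:
            self.drops += 1
            packet, _ = self._pop(now)
            self.dropping = True
            self.count = 1
            self.drop_next = now + INTERVAL
        return packet


def run(arrivals_per_ms, duration_ms=1000):
    """Feed the queue for duration_ms while draining one packet per millisecond."""
    codel = SimpleCoDel()
    for tick in range(duration_ms):
        now = tick / 1000.0
        for _ in range(arrivals_per_ms):
            codel.enqueue("pkt", now)
        codel.dequeue(now)
    return codel.drops


print("drops at matched load:", run(arrivals_per_ms=1))
print("drops at 2x overload: ", run(arrivals_per_ms=2))
```

At matched load, packets never wait and nothing is dropped. Under sustained overload, the queue starts dropping once delay has stayed above the target for a full interval, which is the signal senders need to slow down. Note that the decision is based on how long packets wait, not on how many packets are in the queue.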

Transport Layer: The Internet is Changing

During the webinar, Dan and Jeremy also walked through the history of the transport layer, from HTTP/1 onward. One of the latest and most important developments is the introduction of QUIC. This is essentially a new general-purpose replacement for TCP. Standardized in May 2021 as RFC 9000, QUIC is now supported by all major browsers and libraries, and extensively used by Google.

QUIC provides all the services of TCP with added benefits. If a packet is dropped, for example, it has no impact on the delivery to the application of data in other streams of the same connection. This makes a big difference in overall web performance and will be how most new applications are built. There will always be TCP in a network, but QUIC will soon become the standard.

TCP Acceleration

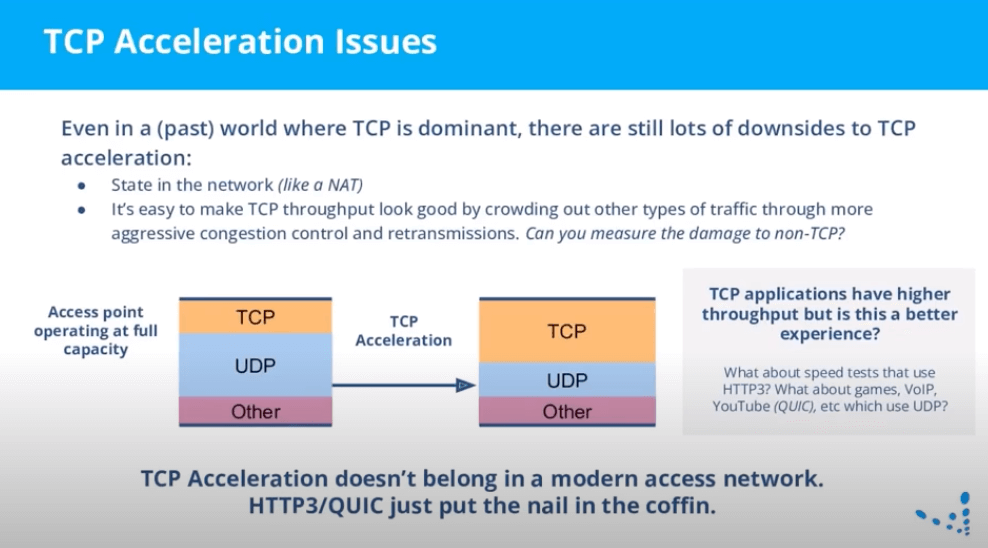

Our hosts then took a look at TCP acceleration and some of its inherent drawbacks. These include the fact that it’s hard to do TCP congestion control in a way that’s fair but that still gets you good throughput.

Consider an access point at full capacity. Anything that’s done to make TCP better necessarily makes everything else worse. The bandwidth has to come from somewhere if the link is already busy. If you’re not measuring the impact to everything else at the same time, the net effect is going to be a worse customer experience.

TCP’s and QUIC’s congestion control are designed to be compatible. They behave roughly the same and they should share a link equally. If you have an access network that needs TCP acceleration to deliver a good experience, it’s guaranteed that QUIC is not getting a good experience, because something entirely encrypted like QUIC can’t be helped by a middlebox. So if your network needs TCP acceleration to make Netflix happy, you can bet the YouTube QUIC traffic is already in pain: it’s seeing all the same packet loss and retransmissions as the TCP traffic, and there’s no way to fix it in that situation.

The Benefits of QUIC

So much traffic now uses the QUIC protocol that if you’re relying on TCP acceleration for a good experience, you’re setting things up for a net negative experience across your customers. The importance of TCP traffic is going down because the major apps are switching to QUIC, and these are the apps your users care about the most.

In essence, if you rely on TCP acceleration to make the experience better, you’re just papering over the underlying problems in the access network. Those problems should be fixed at the source, which makes everything better for everyone without needing protocol- or application-specific complexity. TCP acceleration, DPI, and similar techniques are traffic management, not QoE: they don’t really measure and improve subscriber quality of experience.

View the full webinar below!